AI-Driven Threat Detection: The Future of Cyber Defense

The Obsolescence of Static Defense

The modern digital landscape is expanding at a velocity that human oversight can no longer match. For decades, organizations relied on static defense mechanisms such as firewalls and antivirus software, which depend on predefined rules and known malware signatures. While effective against recognized threats, these legacy systems possess a fatal flaw: they are blind to the unknown.

In an era defined by zero-day exploits and polymorphic malware, relying on what has happened in the past to predict future attacks is a failing strategy. This is where Artificial Intelligence (AI) and Machine Learning (ML) intervene. By transitioning from signature-based detection to behavioral analysis, AI is not merely enhancing cybersecurity; it is fundamentally restructuring how organizations detect, analyze, and neutralize threats.

Beyond Signatures: The Mechanics of Behavioral Analysis

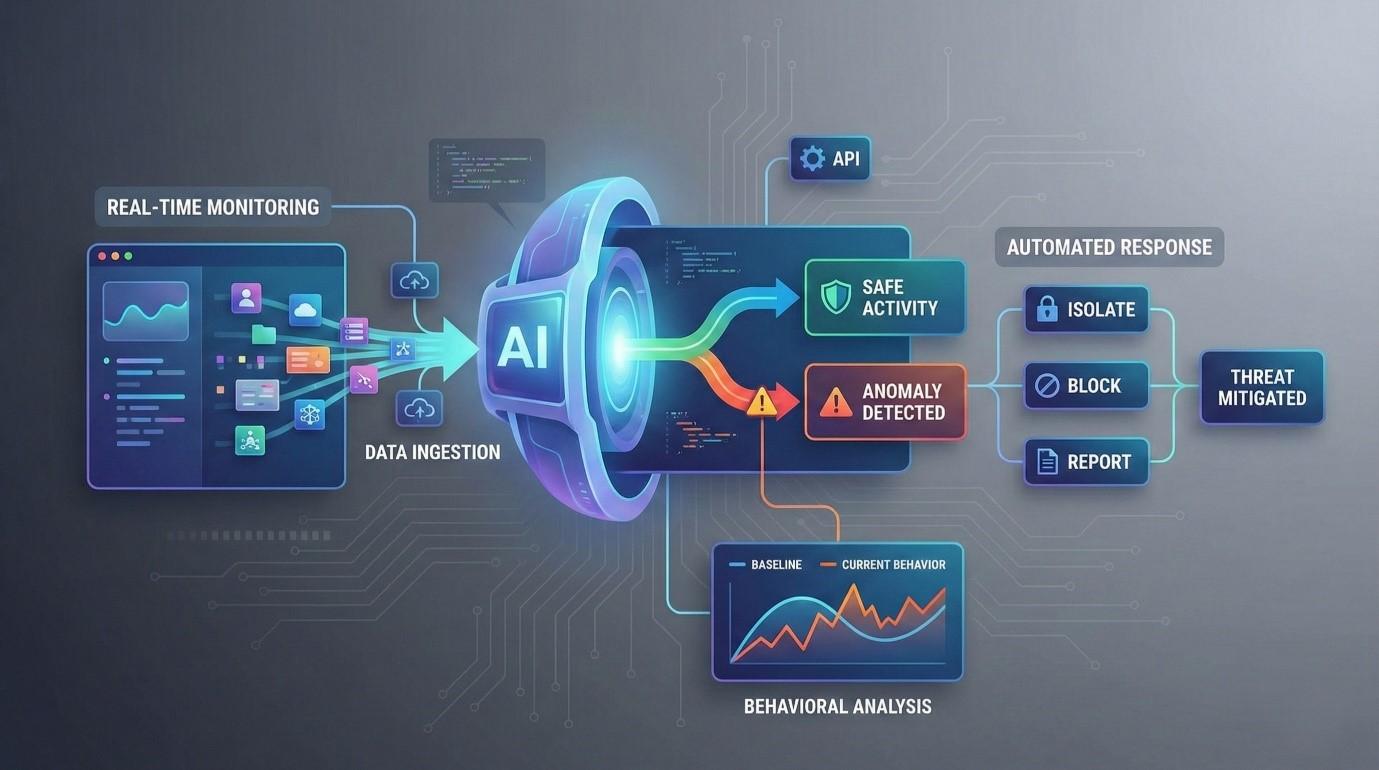

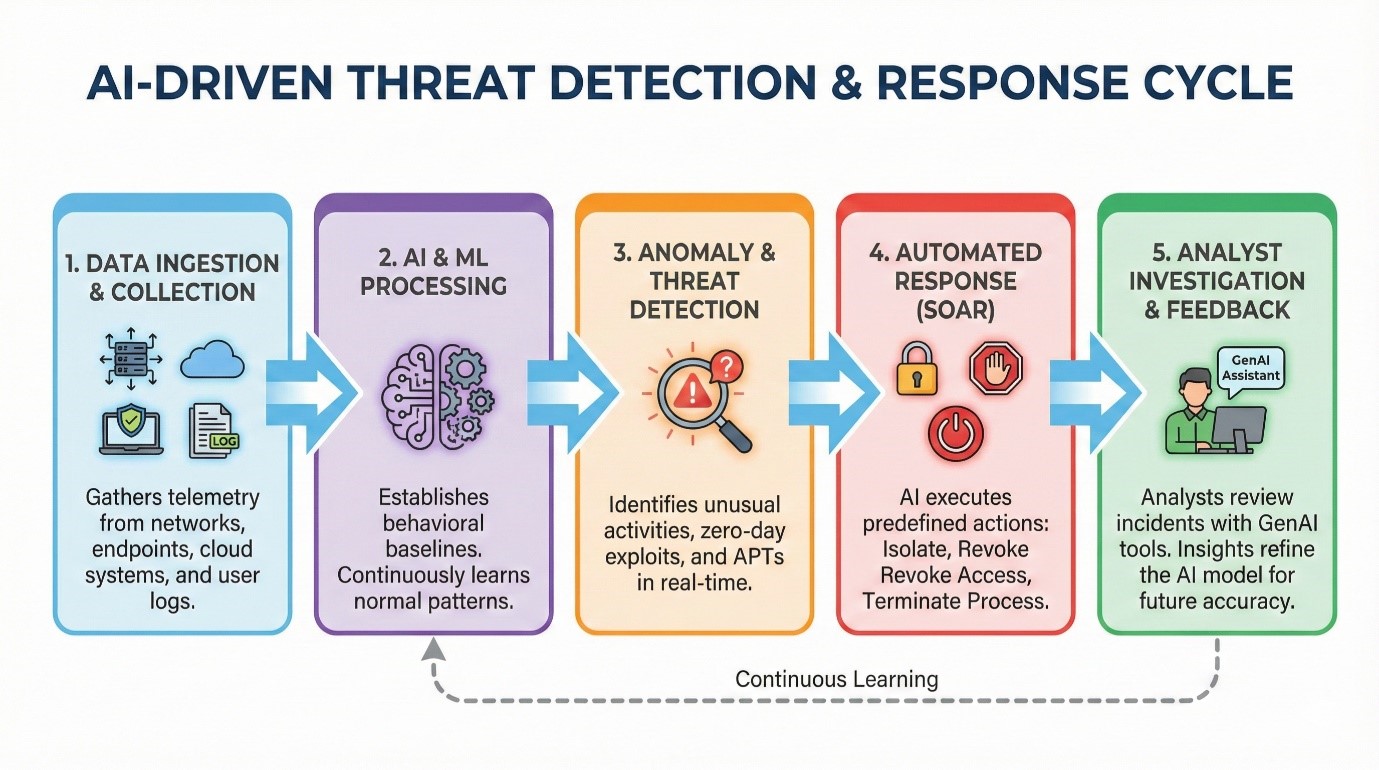

The core innovation of AI in cybersecurity is its ability to establish a baseline of "normality." Unlike traditional tools that scan for specific malicious code, AI models ingest vast amounts of telemetry data from endpoints, network traffic, cloud infrastructure, and user logs.

User and Entity Behavior Analytics (UEBA)

Advanced AI systems utilize User and Entity Behavior Analytics (UEBA) to monitor day-to-day operations. Instead of looking for a specific virus, the system learns how a specific user interacts with the network.

- The Baseline: The AI learns that User A typically logs in from London between 9:00 AM and 6:00 PM and accesses specific file repositories.
- The Anomaly: If User A’s credentials suddenly attempt a login from a different continent at 3:00 AM and initiate a massive data exfiltration from a restricted server, the AI flags this immediately, even if the credentials are valid.

This behavior-based approach allows security teams to identify Advanced Persistent Threats (APTs) and insider threats that successfully bypass traditional perimeter defenses.
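The baseline-and-anomaly idea above can be sketched in a few lines of Python. This is a toy illustration, not how any particular UEBA product works: the `LoginEvent` and `LoginBaseline` names, the country codes, and the choice to track only login country and hour are all assumptions made for the example. Production systems typically use statistical or learned models over many more signals.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class LoginEvent:
    user: str
    country: str  # hypothetical result of a geo-IP lookup
    hour: int     # local hour of day, 0-23


class LoginBaseline:
    """Toy per-user baseline of login countries and hours (illustrative only)."""

    def __init__(self) -> None:
        self._countries: Dict[str, Set[str]] = {}
        self._hours: Dict[str, Set[int]] = {}

    def learn(self, event: LoginEvent) -> None:
        """Record a login observed during normal operation."""
        self._countries.setdefault(event.user, set()).add(event.country)
        self._hours.setdefault(event.user, set()).add(event.hour)

    def anomalies(self, event: LoginEvent) -> List[str]:
        """Return the reasons this login deviates from the user's baseline."""
        reasons = []
        if event.country not in self._countries.get(event.user, set()):
            reasons.append("unfamiliar location")
        if event.hour not in self._hours.get(event.user, set()):
            reasons.append("unusual hour")
        return reasons


# Learn User A's habit: logins from the UK during hours 9 through 17.
baseline = LoginBaseline()
for hour in range(9, 18):
    baseline.learn(LoginEvent("user_a", "GB", hour))

print(baseline.anomalies(LoginEvent("user_a", "GB", 10)))  # []
print(baseline.anomalies(LoginEvent("user_a", "BR", 3)))   # ['unfamiliar location', 'unusual hour']
```

Note that the second login is flagged purely on behavior; the sketch never checks whether the credentials are valid.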

Accelerated Response: Integrating AI with SOAR and SIEM

Detection is only half the battle; the speed of response determines the magnitude of the damage. In traditional Security Operations Centers (SOCs), analysts are often overwhelmed by "alert fatigue," sifting through thousands of flags to find genuine threats.

Modern AI integrates with Security Information and Event Management (SIEM) and Security Orchestration, Automation, and Response (SOAR) platforms to improve incident response metrics, most notably the time it takes to contain a threat.

Reducing Mean Time to Respond (MTTR)

AI-driven automation can execute defensive protocols without human intervention. Upon detecting a high-confidence threat, the system can autonomously:

1. Isolate compromised endpoints from the main network to prevent lateral movement.
2. Revoke user access tokens immediately.
3. Terminate malicious processes or scripts running in the background.
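The three steps above can be sketched as a small playbook function. This is a hedged illustration only: the `Alert` fields, the `plan_response` name, and the 0.9 confidence cutoff are assumptions (the text says only "high-confidence"), and the function returns descriptions of actions rather than calling any real SOAR or EDR API.

```python
from dataclasses import dataclass
from typing import List

# Assumed cutoff for "high-confidence"; a real deployment would tune this.
HIGH_CONFIDENCE = 0.9


@dataclass
class Alert:
    host: str
    user: str
    process_id: int
    confidence: float  # model score in the range [0, 1]


def plan_response(alert: Alert, threshold: float = HIGH_CONFIDENCE) -> List[str]:
    """Return the steps to take for this alert.

    High-confidence alerts get the three containment steps; anything
    below the threshold is routed to a human analyst instead.
    """
    if alert.confidence < threshold:
        return [f"queue alert on {alert.host} for analyst review"]
    return [
        f"isolate endpoint {alert.host}",
        f"revoke access tokens for {alert.user}",
        f"terminate process {alert.process_id} on {alert.host}",
    ]


for step in plan_response(Alert("ws-042", "user_a", 4312, confidence=0.97)):
    print(step)
```

Keeping the decision (which steps to run) separate from the execution makes it easier to log, review, and test what the automation would have done before granting it the ability to act.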

The Generative AI Revolution in Security Operations

While machine learning handles detection, Generative AI (GenAI) is transforming investigation and reporting. Large Language Models (LLMs), such as those powering Microsoft’s Security Copilot, are becoming force multipliers for security analysts.

- Incident Summarization: GenAI can parse complex log data and generate concise, human-readable summaries of an attack chain.
- Code Deobfuscation: Analysts can use AI to explain complex, obfuscated malicious scripts in plain English, speeding up forensic analysis.
- Guided Remediation: AI assistants can suggest step-by-step remediation strategies based on the specific architecture of the victimized network.

This democratization of knowledge allows junior analysts to handle complex incidents that previously required senior-level expertise.

Critical Challenges and Risks

Despite its transformative potential, the deployment of AI in cybersecurity is not without significant hurdles.

1. The Resource Barrier

Training and running sophisticated ML models require substantial computational power and financial investment. For Small and Medium-sized Enterprises (SMEs), the cost of infrastructure and the scarcity of skilled AI-security professionals remain high barriers to entry.

2. Adversarial AI and Model Poisoning

AI is a double-edged sword: the same capabilities that strengthen defense can be weaponized. Cybercriminals are using AI to automate attacks, create convincing deepfakes for social engineering, and develop malware that adapts to evade detection. Furthermore, attackers may attempt data poisoning, that is, feeding false data to a defense model during its training phase to blind it to specific attack vectors.
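To make data poisoning concrete, here is a deliberately simple sketch. It assumes a detector that flags any upload larger than the mean plus three standard deviations of its training data; the function names and all of the numbers are made up for illustration, and real detection models are far more complex. The point is only that contaminating the training set shifts what the model considers normal.

```python
from statistics import mean, stdev


def fit_threshold(training_values, k=3.0):
    """Learn a cutoff: mean + k standard deviations of the training data."""
    return mean(training_values) + k * stdev(training_values)


def is_anomalous(value, threshold):
    """Flag any value above the learned cutoff."""
    return value > threshold


# Clean training data: megabytes uploaded per session (made-up numbers).
clean = [10, 12, 11, 9, 13, 10, 11, 12, 10, 11]

# Poisoned copy: the attacker slips a few huge sessions into the training set.
poisoned = clean + [400, 450, 500]

attack_upload = 300  # the exfiltration the attacker later wants to hide

print(is_anomalous(attack_upload, fit_threshold(clean)))     # True
print(is_anomalous(attack_upload, fit_threshold(poisoned)))  # False
```

Trained on clean data, the detector catches the 300 MB upload. Trained on the poisoned set, its threshold has been pushed high enough that the same upload passes as normal.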

3. The "Black Box" Problem

For AI to be trusted, it must be explainable. In highly regulated industries, the "Black Box" nature of deep learning, where the decision-making process is opaque, poses compliance issues. Organizations must balance performance with explainability to ensure accountability.

Looking Ahead: The Era of Predictive Defense

As we look toward the future, the role of AI will shift from real-time detection to predictive analysis. By analyzing global threat intelligence and internal vulnerabilities, AI will forecast potential breach pathways before they are exploited.

However, the horizon also holds the challenge of Quantum Computing. As quantum technologies mature, they threaten to break current encryption standards. AI will play a pivotal role in the transition to post-quantum cryptography, managing the complexity of these new security paradigms.

Artificial Intelligence has successfully moved cybersecurity from a reactive posture to a proactive one. It is no longer a luxury but a necessity for maintaining digital resilience. While AI will never fully replace human intuition and ethical judgment, the partnership between human expertise and algorithmic speed is the only viable defense against the sophistication of modern cyber warfare.

Frequently Asked Questions (FAQs)

Q1: Can AI completely replace human security analysts? No. While AI excels at data processing and pattern recognition, it lacks the contextual understanding, ethical judgment, and strategic decision-making capabilities of human experts. AI is a tool to augment human capabilities, not replace them.

Q2: What is a Zero-Day exploit? A Zero-Day exploit is a cyberattack that targets a software vulnerability that is unknown to the software vendor and to antivirus vendors. Because the vulnerability is unknown, no patch and no signature exist yet, so traditional signature-based security is unlikely to detect it. This makes AI behavior analysis crucial for defense.

Q3: How does AI help with False Positives? Traditional systems often flag benign activities as threats (false positives). AI and Machine Learning models continuously learn from analyst feedback, refining their algorithms over time to distinguish between actual threats and unusual but safe user behavior, thereby reducing false alarms.
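The feedback loop in Q3 can be illustrated with a very small sketch. It is an assumption-heavy simplification: instead of retraining a model, it just counts analyst verdicts per alert type and mutes types that have proven to be mostly noise. The `FeedbackFilter` class, the alert type names, and the default thresholds are hypothetical.

```python
from collections import Counter


class FeedbackFilter:
    """Tracks analyst verdicts per alert type and mutes types that are mostly noise."""

    def __init__(self, min_reviews=5, min_precision=0.2):
        self.min_reviews = min_reviews      # verdicts needed before muting is allowed
        self.min_precision = min_precision  # minimum share of confirmed threats
        self._confirmed = Counter()
        self._dismissed = Counter()

    def record(self, alert_type, was_real_threat):
        """Store one analyst verdict for an alert of this type."""
        if was_real_threat:
            self._confirmed[alert_type] += 1
        else:
            self._dismissed[alert_type] += 1

    def should_alert(self, alert_type):
        """Keep alerting unless enough reviews show this type is rarely a real threat."""
        reviews = self._confirmed[alert_type] + self._dismissed[alert_type]
        if reviews < self.min_reviews:
            return True  # not enough feedback yet
        return self._confirmed[alert_type] / reviews >= self.min_precision


feedback = FeedbackFilter()
for _ in range(5):
    feedback.record("vpn_login_new_device", was_real_threat=False)
for verdict in (True, True, True, True, False):
    feedback.record("mass_file_download", was_real_threat=verdict)

print(feedback.should_alert("vpn_login_new_device"))  # False
print(feedback.should_alert("mass_file_download"))    # True
```

A learned model would use the same verdicts as training labels rather than simple counts, but the effect described in the answer is the same: alerts that analysts repeatedly dismiss stop reaching the queue.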